Venting to a Bot: Gen Z’s New Therapist

By Author and Translator Serena Bader

At 2 a.m., feeling stressed and overwhelmed, you open your phone. You could text your group chat. You could text your therapist. Instead, you type into ChatGPT: “Why do I feel like this? What should I do?”

You’re not alone. More and more, Gen Z is turning to chatbots for answers…and for comfort.

Back in the day, therapy happened in quiet offices, behind closed doors, at carefully scheduled times. Now, it’s happening on phones, in chat windows, and often with no human presence at all. For Gen Z, turning to ChatGPT for mental health support isn’t just a trend; it’s a reflection of what they need: honest opinions, quick answers, and affordable solutions.

Gen Z’s Bestie. Kinda Their Therapist, Too

Gen Z doesn’t see ChatGPT as a bot; they see it as a best friend. Someone (or something) they can talk to at any hour, ask for advice, and vent to with no fear of being judged. It’s not a certified therapist, but maybe that’s what makes it so comforting. ChatGPT doesn’t sit across from you with a pen and notepad, doesn’t set appointments, and never asks you for money. It simply responds: fast, consistently, and with opinions when asked.

In a world where mental health care can be pretty hard to access, ChatGPT has quietly become a comfort zone. It’s like a diary, if your diary could talk. For Gen Z, this isn’t about replacing professional help. It’s about finding something that meets them where they are: on their phones, in real time, when life feels overwhelming.

What are they talking about? It’s not always the big, life-changing stuff. Sure, some users ask about deep situations, relationship struggles, or moments of anxiety, but often, it’s about the small, day-to-day details. “Is it okay to feel like this?” “How do I stop overthinking?” “What should I text them back?” These aren’t necessarily questions that need a full therapy session, but questions that need reassurance in the moment.

It’s not a space that adds pressure. It’s low-key, low-stress, and comfortable enough that you can talk about your problems freely. Sometimes, when you’re talking to a friend or even a therapist, a thought sneaks in: “Am I bothering them with my problems?” or “I don’t want to force them to listen.” With ChatGPT, that pressure disappears. You can vent, overthink, ask, and leave on your own terms.

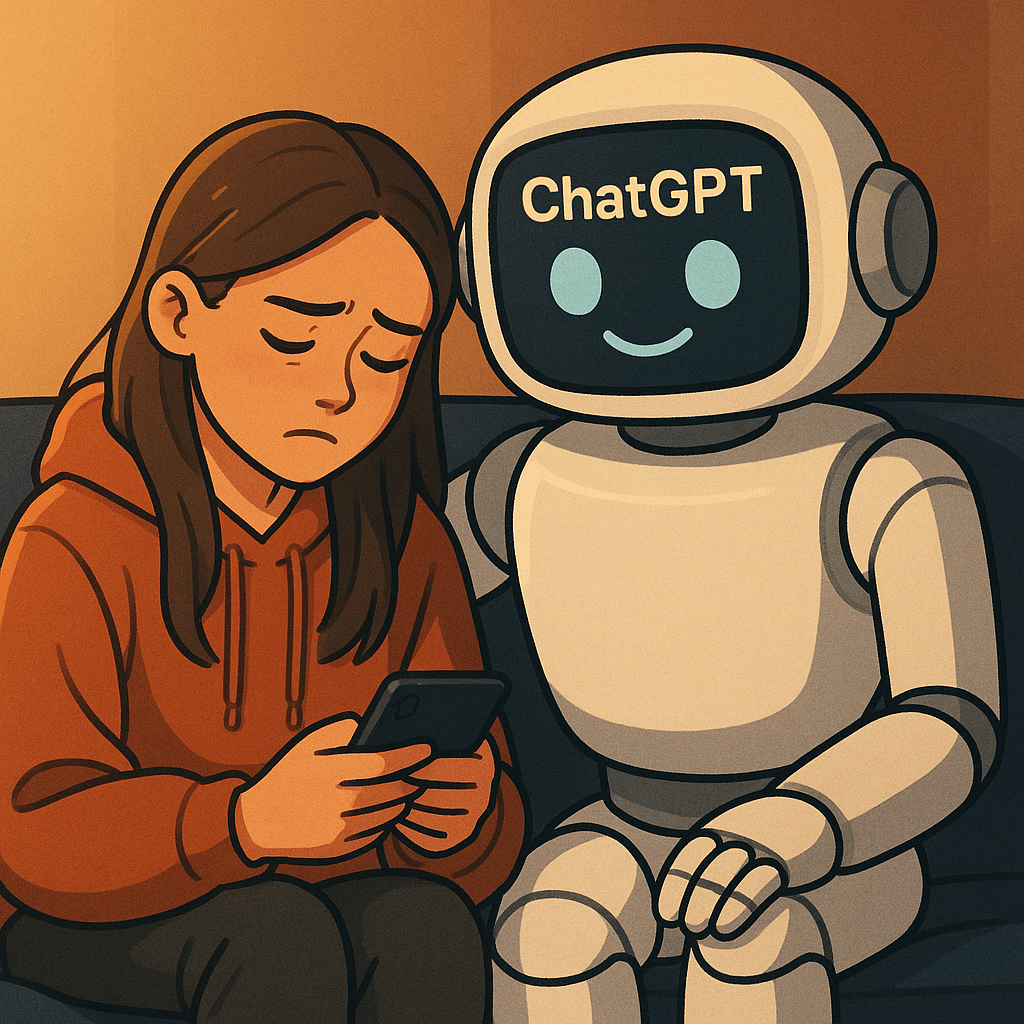

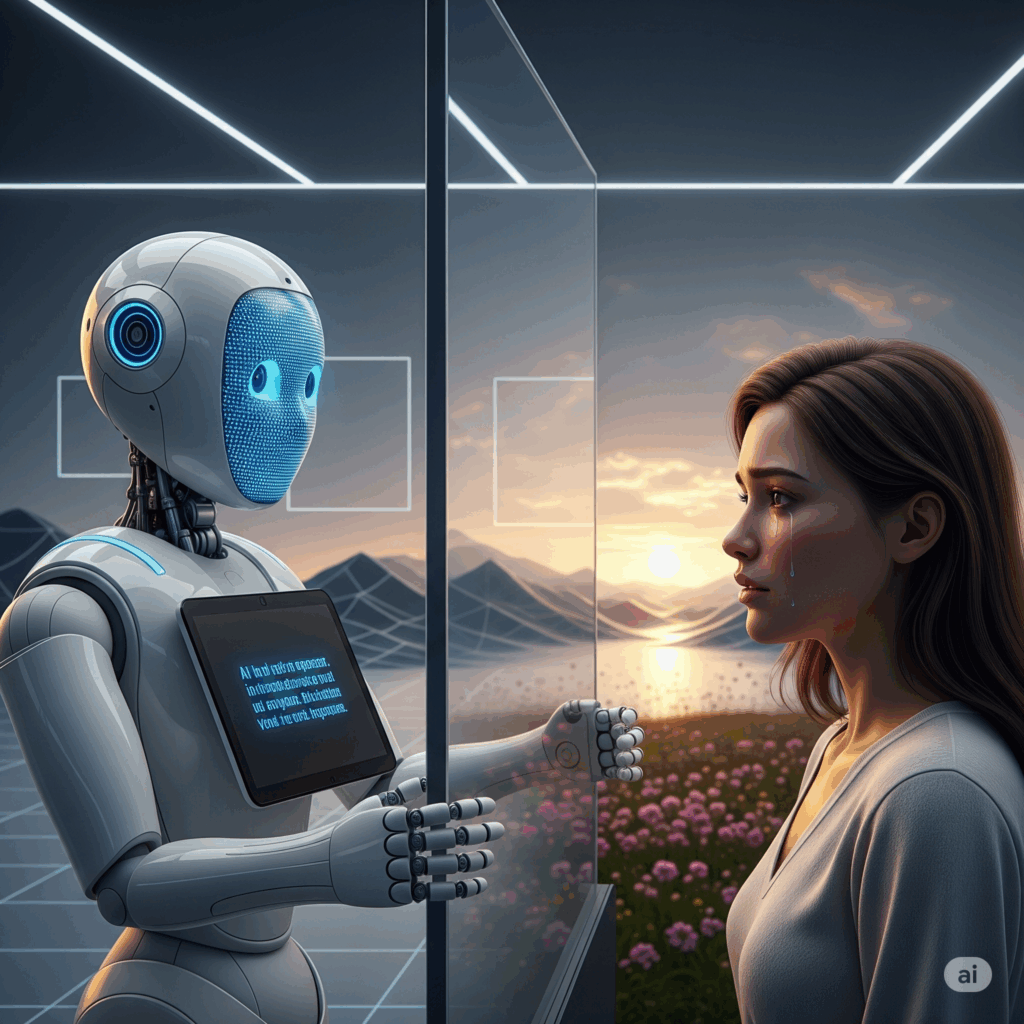

A young Gen Z woman finds comfort in ChatGPT, portrayed as a friendly robot offering support while she vents on her phone.

AI Therapy’s Hidden Danger

It’s important to remember that AI isn’t human. Unlike a trained therapist, it lacks empathy, emotional intelligence, and the ability to read a person’s body language and facial expressions to see what’s really going on. A 2023 study published in Frontiers in Psychology found that while AI chatbots can provide basic emotional support, they often fail to recognize complex mental health conditions, potentially leading users astray.

This lack of nuance means AI can unintentionally offer harmful advice. For example, if someone expresses suicidal thoughts, most chatbots, including ChatGPT, aren’t equipped to intervene properly or automatically connect users to emergency resources. This can be dangerous, as about 80% of young people with mental health conditions don’t receive professional care, according to the National Alliance on Mental Illness (NAMI).

AI therapy can give a false sense of security. Those quick, comforting replies might make people feel like they’re on top of things, when really they’re just putting a fresh coat of paint on old damage. Real healing takes human connection, personalized care, and time, things that no AI can truly provide.

That said, AI isn’t inherently bad. It can be a helpful first step or an additional tool for those who can’t access therapy easily. But it’s crucial to understand its limits. For Gen Z and beyond, the message is clear: Chatbots can’t replace therapists. They’re a starting point, not the finish line, in mental health care.

A distressed human seeks genuine connection beyond the cold, digital comfort offered by an AI, symbolizing the irreplaceable need for human empathy in true healing.

AI chatbots show how technology can evolve, transforming how new generations approach mental health. They offer immediate comfort and accessibility in ways that weren’t possible before. But real healing happens through genuine human connection: friends who listen, therapists who understand, communities that support.

Balance is key: AI is a helpful tool, but it shouldn’t replace human relationships. Chatbots can be there in those lonely moments when reaching out feels too hard. But lasting support comes from people who truly know you, who stick around through the ups and downs, and who offer more than just quick replies.

At the end of the day, healing isn’t just about getting answers; it’s about feeling heard and understood by someone who really cares. AI can be there when you need to talk, but it can’t replace the comfort of a real person who sticks around when things get tough. For Gen Z, the trick is figuring out how to use technology without losing the human connections that truly keep us going.

About the Author

Serena Bader is a translation student at Université Saint-Joseph (USJ) who loves writing about the things everyone’s talking about: from social trends to the way technology shapes how we live and connect. She’s curious about how Gen Z thinks, feels, and vents (sometimes to chatbots). Serena has a soft spot for stories that mix real-life struggles with what’s trending. When she’s not writing, you’ll probably find her scrolling or translating.